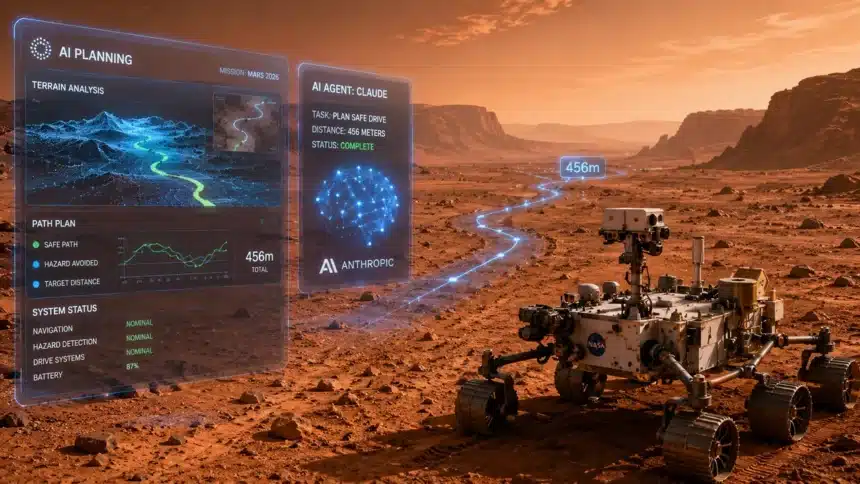

NASA’s Perseverance rover completed the first Mars drives ever planned autonomously by an artificial intelligence system, using Anthropic’s Claude vision-language model to analyze orbital imagery and generate safe waypoints across 456 meters of Martian terrain. The test, which ran without any human-generated route waypoints, is the most operationally significant deployment of large language model AI in space exploration to date. What it proves about Claude’s capabilities, about AI reliability in high-stakes autonomous systems, and about the future of planetary exploration is worth examining carefully, beyond the headline.

- Distance covered: 456 meters of Mars terrain navigated using AI-generated waypoints

- AI system: Anthropic’s Claude vision-language model

- Input data: Orbital imagery from Mars Reconnaissance Orbiter, analyzed by Claude to identify safe driving paths

- Human involvement: Engineers verified the AI-generated plan before execution but did not generate the route waypoints themselves

- Vehicle: Perseverance rover, operating in Jezero Crater, Mars

- Significance: First Mars drives fully planned by AI rather than by human mission controllers

How the Autonomous Planning System Works

Mars rover navigation has always involved human planners. Mission controllers on Earth receive images from the rover and from orbiters, analyze the terrain, identify safe paths, generate waypoints, and uplink those commands to the rover. The one-way communication delay between Earth and Mars ranges from roughly 3 to 22 minutes depending on orbital positions, so a round trip takes about twice as long. That delay rules out real-time control: mission controllers plan driving sessions that the rover executes hours later, often without the ability to intervene if unexpected obstacles appear.
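
The delay figures follow from light travel time: distance divided by the speed of light. The sketch below is a rough check, assuming approximate closest and farthest Earth-Mars distances of 54.6 million and 401 million kilometers. Those distances and the function names are illustrative assumptions, not figures from the mission.

```python
# Speed of light in km/s (exact by definition of the meter).
SPEED_OF_LIGHT_KM_S = 299_792.458

# Approximate Earth-Mars distances in km. These are assumed round figures
# for illustration, not values taken from the mission.
CLOSEST_KM = 54.6e6
FARTHEST_KM = 401e6


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way signal travel time in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


def round_trip_delay_minutes(distance_km: float) -> float:
    """Return the time for a command to arrive and a reply to come back."""
    return 2.0 * one_way_delay_minutes(distance_km)


for label, distance in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
    print(
        f"{label}: one-way {one_way_delay_minutes(distance):.1f} min, "
        f"round trip {round_trip_delay_minutes(distance):.1f} min"
    )
```

Even at closest approach, a command and its confirmation take minutes to complete the loop, which is why drives are planned in advance rather than steered live.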

The Claude-based autonomous planning system changes the role of human controllers in the waypoint generation step. Instead of controllers manually identifying safe paths through orbital imagery, Claude’s vision-language model analyzes the orbital photographs, identifies terrain features including rocks, slopes, and sand traps that pose hazards to the rover’s six-wheel drive system, and generates a sequence of waypoints that route the rover safely through the environment. Human engineers review the AI-generated plan before execution, but they are checking a finished product rather than generating the route themselves.

The technical capability that makes this possible is Claude’s ability to reason about spatial relationships in images. Identifying a safe path through rocky Martian terrain requires not just object detection, which simpler vision systems can perform, but contextual reasoning about what the detected objects mean for a vehicle with specific mobility constraints. A rock that is safely traversable at a 10-degree approach angle might be impassable at a 40-degree approach angle. A slope that looks gentle in orbital imagery might be steep enough to create a rollover risk when combined with the loose sand texture visible in adjacent pixels. Claude’s spatial reasoning capabilities are what allow it to generate routes that account for these contextual factors rather than just identifying hazard locations in isolation.
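
To make the idea of context-dependent hazards concrete, here is a toy sketch in which the same terrain cell is judged differently depending on approach angle and surface texture. Every name and threshold in it (`TerrainCell`, `Limits`, the 20-degree slope limit, and so on) is a hypothetical placeholder. It is not a description of how Claude or NASA’s planners actually evaluate terrain, and it does not use real rover mobility limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TerrainCell:
    """One patch of terrain as it might be summarized from imagery (hypothetical)."""
    slope_deg: float
    loose_sand: bool
    rock_height_m: float  # 0.0 means no rock in the cell


@dataclass(frozen=True)
class Limits:
    """Illustrative thresholds only; not real rover mobility limits."""
    max_slope_deg: float = 20.0
    sand_slope_penalty_deg: float = 8.0
    max_rock_height_m: float = 0.3
    max_rock_approach_deg: float = 25.0


def is_traversable(cell: TerrainCell, approach_angle_deg: float,
                   limits: Limits = Limits()) -> bool:
    """Judge a cell in context rather than flagging each hazard in isolation."""
    # Loose sand lowers the slope the vehicle is allowed to attempt.
    slope_limit = limits.max_slope_deg
    if cell.loose_sand:
        slope_limit -= limits.sand_slope_penalty_deg
    if cell.slope_deg > slope_limit:
        return False
    # A rock that is too tall is rejected regardless of approach angle.
    if cell.rock_height_m > limits.max_rock_height_m:
        return False
    # A smaller rock is acceptable only at a shallow approach angle.
    if cell.rock_height_m > 0.0 and approach_angle_deg > limits.max_rock_approach_deg:
        return False
    return True


rocky = TerrainCell(slope_deg=15.0, loose_sand=False, rock_height_m=0.2)
print(is_traversable(rocky, approach_angle_deg=10.0))
print(is_traversable(rocky, approach_angle_deg=40.0))
```

The point of the sketch is that a single "hazard or not" label per object is not enough: the answer depends on how the vehicle would meet the terrain.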

Why 456 Meters Matters

The 456-meter distance was not chosen arbitrarily. Typical Perseverance driving sessions range from 50 to 150 meters. A 456-meter session is a long drive by current mission standards, covering varied terrain that requires reasoning about multiple hazard types across the full route. Planning a 456-meter drive safely requires the AI to maintain consistent reasoning quality not just for the first waypoint but across the full sequence of decisions that compose the route.

That consistency requirement is where many AI systems fail in real-world deployments. A system that reasons correctly about terrain 90 percent of the time can fail catastrophically over a 456-meter drive, because errors compound across the sequence of decisions and any bad waypoint in the remaining 10 percent is one the rover attempts to execute in the real Martian environment. Mission controllers reviewing the AI-generated plan would catch obvious failures, but subtle reasoning errors that look plausible in orbital imagery yet create hazards in real terrain are exactly the type of failure that is hardest to catch in review. The successful 456-meter execution is evidence that Claude’s reasoning was not just plausible but accurate across a sustained and varied planning challenge.
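
The arithmetic behind that concern is simple compounding. If each routing decision is correct with probability p, and decisions are treated as independent (a simplifying assumption made here, not a claim about how terrain errors actually correlate), the chance that all n decisions in a route are correct is p raised to the power n. The waypoint counts below are hypothetical; the number used in the actual drives is not stated.

```python
def route_success_probability(per_decision_accuracy: float, decisions: int) -> float:
    """Probability that every decision on a route is correct, assuming independence."""
    if not 0.0 <= per_decision_accuracy <= 1.0:
        raise ValueError("per_decision_accuracy must be between 0 and 1")
    if decisions < 0:
        raise ValueError("decisions must be non-negative")
    return per_decision_accuracy ** decisions


def required_per_decision_accuracy(target_route_success: float, decisions: int) -> float:
    """Per-decision accuracy needed to reach a target whole-route success rate."""
    if not 0.0 < target_route_success <= 1.0:
        raise ValueError("target_route_success must be in (0, 1]")
    if decisions <= 0:
        raise ValueError("decisions must be positive")
    return target_route_success ** (1.0 / decisions)


# Hypothetical route lengths, measured in number of waypoint decisions.
for n in (1, 5, 10, 20):
    print(f"{n:>2} decisions at 90% each: {route_success_probability(0.9, n):.1%} route success")

print(f"Needed per decision for 99% over 20 decisions: "
      f"{required_per_decision_accuracy(0.99, 20):.4%}")
```

Under these assumptions, 90 percent per-decision accuracy leaves only about a one-in-three chance of an error-free route at ten decisions, which is why long drives demand far higher per-decision reliability than the headline accuracy figure suggests.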

What Claude’s Vision Capabilities Add That Simpler Systems Cannot

NASA’s rovers have had onboard autonomous navigation capabilities for years through software like AutoNav. Those systems use stereoscopic cameras mounted on the rover itself to detect obstacles in real time and stop or reroute around them during execution. The Claude-based system does something different and complementary: it plans the full route before execution begins, using orbital imagery that provides a much wider field of view than the rover’s onboard cameras.

The difference between the two approaches is the planning horizon. Rover-mounted AutoNav can avoid an obstacle that appears directly in front of the rover. Claude’s orbital planning can route around a field of hazardous rocks that extends 200 meters ahead, choosing a path that avoids the entire obstacle field rather than reacting to each individual rock as it becomes visible. That wider planning horizon allows longer, more efficient drives with less stopping and rerouting during execution.

The combination of orbital AI planning and rover-based reactive navigation creates a two-layer safety system: the AI plan avoids known hazards identified from orbit, and the rover’s onboard systems handle any unexpected hazards that the orbital imagery did not resolve clearly enough to plan around. That layered approach is more robust than either system alone.
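
The layering can be sketched in a few lines. This is a deliberately simplified illustration, not the mission’s software: `plan_route` only filters candidate waypoints against hazards known from orbit rather than doing real path planning, and `execute_drive` stands in for onboard checks with a caller-supplied function. All names, coordinates, and the clearance value are hypothetical.

```python
import math
from typing import Callable, List, Optional, Tuple

Point = Tuple[float, float]  # (x, y) in meters in a hypothetical local frame


def plan_route(candidates: List[Point], orbital_hazards: List[Point],
               clearance_m: float) -> List[Point]:
    """Layer 1: keep waypoints at least clearance_m from every hazard seen from orbit."""
    return [
        wp for wp in candidates
        if all(math.dist(wp, hazard) >= clearance_m for hazard in orbital_hazards)
    ]


def execute_drive(route: List[Point],
                  onboard_sees_hazard: Callable[[Point], bool]
                  ) -> Tuple[List[Point], Optional[Point]]:
    """Layer 2: follow the route, stopping before the first waypoint the onboard check rejects.

    Returns the waypoints reached and the waypoint that triggered a stop (or None).
    """
    reached: List[Point] = []
    for wp in route:
        if onboard_sees_hazard(wp):
            return reached, wp
        reached.append(wp)
    return reached, None


candidates = [(0.0, 0.0), (10.0, 0.0), (20.0, 0.0), (30.0, 0.0)]
orbital_hazards = [(20.0, 1.0)]  # a rock field visible from orbit
route = plan_route(candidates, orbital_hazards, clearance_m=3.0)

# A small rock at (30, 0) was too small to resolve from orbit but is seen onboard.
reached, stopped_at = execute_drive(route, lambda wp: wp == (30.0, 0.0))
print("planned:", route)
print("reached:", reached, "stopped before:", stopped_at)
```

The planned route never approaches the hazard visible from orbit, and the onboard layer still halts the drive for the one hazard the orbital view missed.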

The Broader Context for AI in Space Exploration

The Perseverance test is the highest-profile deployment of large language model AI in space, but it is part of a broader wave of AI integration into scientific instrument operation and mission planning. Data volumes from planetary science instruments have grown faster than human analysts’ capacity to process them, creating a bottleneck that AI tools are filling. The James Webb Space Telescope generates more spectroscopic data than astronomers can manually analyze. Mars missions generate more imagery than human terrain analysts can process. AI systems that can analyze scientific data at the speed it is generated and surface the most important findings for human review are becoming essential infrastructure for operating at the current frontier of space science.

NASA’s adoption of Anthropic’s Claude specifically reflects the agency’s assessment of which AI systems have the safety and reliability characteristics required for high-stakes applications where errors are expensive or irreversible. A failed Mars drive that damages the rover costs hundreds of millions of dollars in mission capability. NASA’s choice of Claude for a 456-meter autonomous drive test is an implicit certification that the system meets the agency’s confidence threshold for deployment in an environment where there is no opportunity to fix mistakes after the fact.

The AI governance implications are also notable. Major AI companies including Anthropic, Microsoft, and OpenAI have agreed to government pre-release testing frameworks, as TCB is reporting separately today in its analysis of the seven major AI moves defining May 2026. NASA’s Perseverance test is a high-profile demonstration that AI systems can be evaluated and validated for specific high-stakes use cases through rigorous testing before deployment. That process of validating AI safety in practice is exactly the model that regulatory frameworks are trying to formalize across other domains.

What This Means for Anthropic

The NASA deployment adds a category of validated capability to Claude’s public track record that goes beyond the enterprise software deployments in finance and legal that have been the primary evidence base for Anthropic’s enterprise AI claims. Space navigation and terrain analysis require a different type of reasoning than contract review or financial analysis. The common thread is that all three require accurate, consistent reasoning under conditions where errors have real consequences. NASA’s deployment is evidence that Claude’s reasoning quality holds in a new domain under operational conditions rather than just benchmark conditions.

Anthropic’s $200 billion infrastructure commitment with Google Cloud, reported separately in today’s TCB coverage, is the capacity investment that makes it possible to serve high-stakes deployments like NASA’s alongside the company’s growing base of hundreds of enterprise customers, including Goldman Sachs, Blackstone, and other major institutions. The NASA deployment and the Goldman Sachs integration are both evidence of the same underlying capability: sustained, accurate reasoning across complex, high-stakes tasks where the consequences of errors are severe. That capability, at enterprise scale, is what Anthropic is building infrastructure to serve.

The TCB View

The 456-meter Claude-planned Mars drive will be remembered as a milestone for AI autonomy in the same way that the first autonomous car drive on public roads and the first AI victory over a world champion in a complex strategy game are remembered: as the moment when a theoretical capability became a demonstrated operational fact. The technology has now planned and executed the longest autonomous drive in the history of Mars exploration, in one of the most hostile environments on a neighboring planet, successfully.

The question that follows from this demonstration is not whether AI can do this. It can. The question is how quickly AI-planned autonomous operation will become the standard approach for planetary exploration rather than the exception. The answer, given how rapidly the capability has moved from research to operational deployment in 2025 and 2026, is probably faster than mission planners are currently assuming.
